Let me start with the most absurd part: this post itself was born from AI.

I watched a YouTube video, felt something crack open in me, dictated the feeling out loud, and then handed it to an AI agent to help turn it into an article.

In other words: I am using AI to write about the fact that AI makes me afraid.

Yesterday, while scrolling YouTube on my phone, I came across NetworkChuck’s new video: “I almost quit YouTube…”

It felt completely different from his usual content. It was not about tools, not about homelabs, not about some new technical trick or career advice. It was about something much harder to name: the first time a person who genuinely loves technology begins to feel afraid of technology itself.

What struck me was not that he represents the elite edge of the industry. In some ways, the opposite is what made it hit so hard. He feels closer to the many IT enthusiasts and technical workers who started from a low rung and kept climbing through curiosity, obsession, self-teaching, and sheer stubbornness. Not a godlike figure speaking from above, but someone who grew with technology because he loved it.

So when a person like that says, “I hate AI, but I love AI,” it lands differently. It does not sound like a thesis. It sounds like recognition.

I understand that contradiction completely.

Every previous wave of technology brought a familiar kind of pressure. You worried whether you were keeping up, whether you needed to learn a new stack, whether you had fallen behind a new paradigm. But that anxiety still carried excitement inside it. The assumption was always that if you kept learning, kept building, kept adapting, you would still have a place at the table.

That is why many of us in tech never really thought of ourselves as the kind of people vulnerable to FOMO. We were not outside the wave, panicking about missing it. We were already in it.

But this does not feel like FOMO.

It feels more like a dim but persistent intuition: not that I might miss the future, but that something familiar to me may be ending altogether.

What hit me about this video was not that it told me something new in a literal sense. I have already read countless warnings from every angle:

- Anthropic’s work on labor displacement and economic impact;

- public worries from figures like Geoffrey Hinton and Ilya Sutskever;

- the essay “The 2028 Global Intelligence Crisis,” which helped trigger a market shock;

- Steve Yegge’s “The AI Vampire”;

- and pieces like https://shumer.dev/something-big-is-happening.

But those pieces mostly talk about trends, risks, systems, labor markets, and macro consequences.

This felt different.

This felt like a person very much like me saying, in the bluntest possible way: this time is different.

That feeling brought two things to mind.

First: growth curves have become concrete, visual, and terrifying

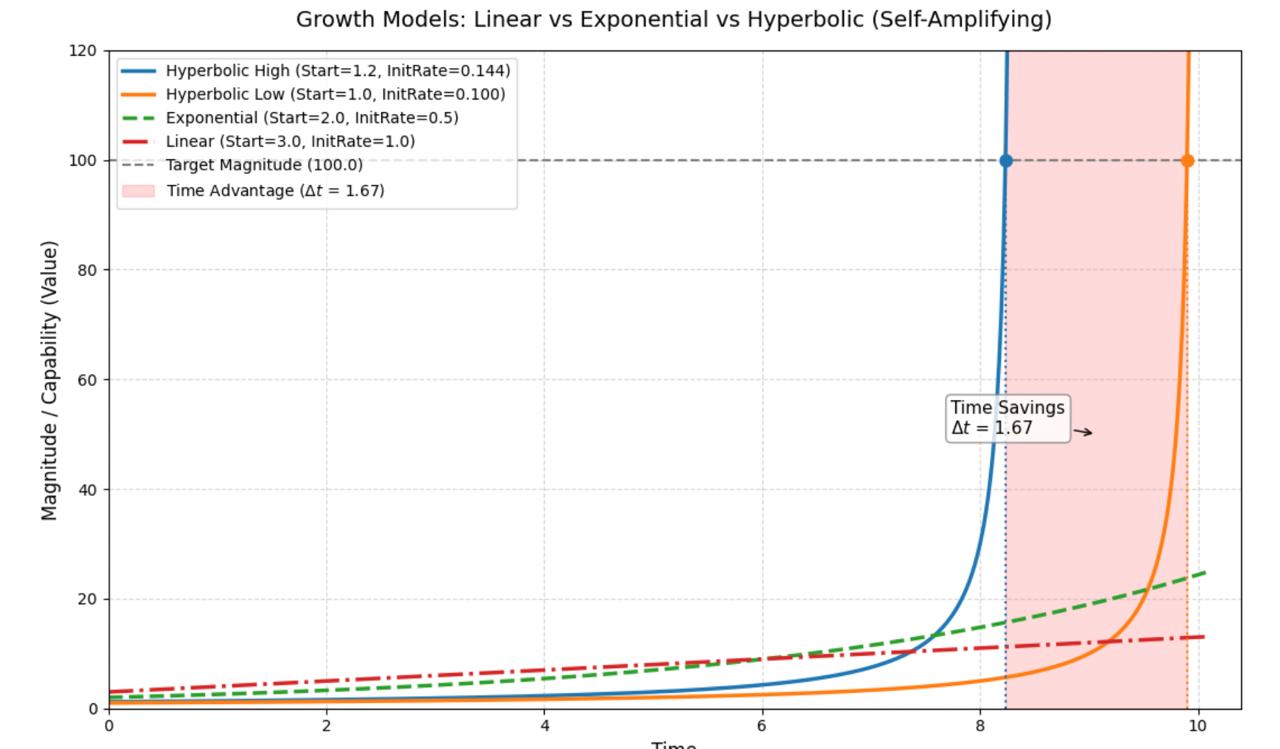

Earlier that same day, I used Gemini to help me write a small Python program to draw three kinds of growth curves:

- linear growth

- exponential growth

- hyperbolic growth

At first I only wanted to visualize a familiar abstract idea: different growth patterns eventually lead to radically different outcomes.

That “growth” could stand for many things: wealth, cognition, skill, influence, leverage, learning speed, or any accumulated advantage. In my head, I mapped it to something simple: the growth of an engineer’s capability.

Seen that way, the curves start to look like metaphors for different technical eras.

Figure 1: The most unsettling part is not merely that linear growth loses to exponential or hyperbolic growth. It is that two hyperbolic curves can still diverge dramatically when their starting points differ only slightly.

Linear growth: getting better at repeating labor

Linear growth reminds me of traditional manual operations work.

You do more, you learn more, you get faster, steadier, and more reliable. Your capability does increase, and early on it may even look impressive because you have real experience and scar tissue.

But the problem is structural: its mode of growth guarantees that it will eventually be surpassed by anything that compounds.

If improvement still depends mainly on your own repeated expenditure of time and attention, it stays fundamentally slow.

Exponential growth: automation, systems, leverage

Exponential growth looks more like the classic technical win condition of the last couple of decades: automation.

Scripts, pipelines, monitoring, platformization, infrastructure as code—what makes them powerful is not just that they replace one manual task. They let capability scale with the system itself.

The bigger the environment, the greater the advantage. The more repetition, the more outsized the return.

For many engineers, a critical career transition was basically this:

stop doing a little more yourself, and start building systems that let the same person achieve a much larger output.
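The claim that linear growth is structurally bound to be overtaken, however large its head start, can be checked in a few lines of Python. This is not the Gemini-generated chart code, which the post does not reproduce. It is a minimal sketch that assumes linear growth is a + b·t and exponential growth is c·e^(r·t), with made-up constants and hypothetical function names.

```python
import math

# Hypothetical parameters chosen only to illustrate the shapes in the text.
LINEAR_START, LINEAR_RATE = 10.0, 1.0  # big head start, fixed gain per unit of time
EXP_START, EXP_RATE = 1.0, 0.3         # small start, compounding gain


def linear(t: float) -> float:
    """Capability that grows by the same amount each unit of time (repeated labor)."""
    return LINEAR_START + LINEAR_RATE * t


def exponential(t: float) -> float:
    """Capability whose gain is proportional to what it already has (automation, leverage)."""
    return EXP_START * math.exp(EXP_RATE * t)


def first_overtake(t_max: float = 100.0, step: float = 0.01):
    """Return the first sampled time at which exponential exceeds linear, or None."""
    for i in range(int(t_max / step) + 1):
        t = i * step
        if exponential(t) > linear(t):
            return t
    return None


if __name__ == "__main__":
    t_cross = first_overtake()
    print(f"exponential (start {EXP_START}) overtakes linear (start {LINEAR_START}) at t ~ {t_cross:.2f}")
    for t in (0, 10, 20, 30):
        print(f"t={t:2d}  linear={linear(t):7.1f}  exponential={exponential(t):9.1f}")
```

With these invented numbers the crossover lands just before t = 10, and after that the gap only widens: at t = 30 the linear curve sits at 40 while the exponential one is above 8,000. The specific crossing time depends entirely on the assumed constants; the fact that a crossing exists does not.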

Hyperbolic growth: human capability amplified by AI

What unsettles me most is hyperbolic growth.

In my chart, I drew two hyperbolic curves. Their starting values differ only slightly. But after some point, one of them shoots upward almost vertically and leaves the other far behind.

That, to me, is the most frightening part of the AI era.

It is not just telling us that:

- linear growth loses to exponential growth;

- exponential growth loses to AI-amplified growth;

- people who refuse AI will fall behind.

We already know all of that.

What is suffocating is this: even if everyone is trying desperately to use AI, the gap can still widen almost immediately.

So the anxiety is no longer just “am I on the train?”

It becomes:

even if everyone boards a train, some trains may still disappear over the horizon almost instantly, while others are left behind with no meaningful way to catch up.

That is the emotional core behind what people now describe as downclassing.
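The divergence of two hyperbolic curves with nearly identical starts can be reproduced numerically. As a sketch only: the post does not say which formula the chart used, so this assumes "hyperbolic" means dx/dt = k·x^2, a standard model that blows up in finite time, and uses a hypothetical constant k = 0.1 with hypothetical starting values 1.0 and 1.1.

```python
import math

# Hypothetical model: "hyperbolic" growth taken as dx/dt = k * x**2.
# Its exact solution x(t) = x0 / (1 - k * x0 * t) blows up at t* = 1 / (k * x0),
# so a larger starting value reaches infinity sooner.
K = 0.1  # illustrative growth constant, not taken from the original chart


def hyperbolic(t: float, x0: float, k: float = K) -> float:
    """Value of the hyperbolic curve at time t; inf once past the blow-up time."""
    denom = 1.0 - k * x0 * t
    return x0 / denom if denom > 0 else math.inf


def blowup_time(x0: float, k: float = K) -> float:
    """Finite time at which the curve starting from x0 diverges."""
    return 1.0 / (k * x0)


if __name__ == "__main__":
    a, b = 1.0, 1.1  # starting values that differ by only 10%
    print(f"blow-up times: a -> {blowup_time(a):.2f}, b -> {blowup_time(b):.2f}")
    for t in (0.0, 5.0, 9.0):
        xa, xb = hyperbolic(t, a), hyperbolic(t, b)
        print(f"t={t:3.1f}  a={xa:7.2f}  b={xb:7.2f}  b/a={xb / xa:5.2f}")
```

Under these assumptions a 10% head start becomes roughly an 11x gap by t = 9 (about 110 versus 10), and the leading curve diverges at about t = 9.09 while the other stays finite until t = 10.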

And there is an even more frightening point hidden behind the chart.

The hyperbolic curves in my figure still begin relatively gently. In that sense, the visualization may be a softened version of reality: it assumes that AI-driven amplification still has some ramp-up period.

But what if the real growth rate is already far steeper than the picture suggests?

What if AI is not giving us a future hyperbolic curve, but something that already looks nearly vertical?

In that case, whether your initial value is 1 or 100 may barely matter. Before the difference has time to express itself, both trajectories could already be rushing toward the ceiling of the field.

That creates a new kind of fear.

Not just “am I growing fast enough?” but “what if everyone is now growing so fast that the system no longer rewards growth at all, and starts eliminating the need for people instead?”
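The worry that a starting value of 1 or 100 might barely matter can be made precise under one simple assumption. If hyperbolic growth is modeled as dx/dt = k x^2, with a larger k standing in, loosely, for a steeper amplifier, then the solution blows up at a finite time t*, and the head start that a hundredfold larger initial value buys shrinks in proportion to 1/k. This is a toy model, not a forecast.

$$
\frac{dx}{dt} = k x^{2},\quad x(0) = x_0
\;\Longrightarrow\;
x(t) = \frac{x_0}{1 - k x_0 t},
\qquad
t^{*}(x_0) = \frac{1}{k x_0}
$$

$$
t^{*}(1) - t^{*}(100) = \frac{1}{k} - \frac{1}{100\,k} = \frac{0.99}{k}
\;\longrightarrow\; 0 \quad \text{as } k \to \infty
$$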

The horizontal line is the truly frightening part

In that chart, I also drew a horizontal line.

It looks simple, but it represents something brutal: the ceiling of a domain, or the boundary condition for a role’s existence.

In the figure, both hyperbolic curves are strong. The issue is not whether they grow. The issue is who touches the line first. Once that happens, the difference stops being merely about productivity. It becomes a difference in opportunity, bargaining power, attention, and eventually whether you still have a place in that field at all.

When a curve touches that line, it means there may no longer be room for more human labor of the old kind.

Put more bluntly:

the moment of contact is not “you were not good enough.” It is “this role may no longer need the old version of you.”

That is why charts like this feel more frightening to me than macro labor reports. A report keeps the fear abstract. A curve makes it local.

And if the real growth rate is even steeper than the chart, then that horizontal line stops looking like a distant boundary and starts looking like a pane of glass we are about to smash into.
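Reading the horizontal line as a fixed ceiling C, and assuming (again only as a toy model) that each curve follows dx/dt = k x^2 from a starting value x_0 below C, the moment a curve touches the line, and the lead one starting value holds over another, come out as:

$$
\frac{1}{x(t)} = \frac{1}{x_0} - k t
\quad\Longrightarrow\quad
t_C(x_0) = \frac{1}{k}\left(\frac{1}{x_0} - \frac{1}{C}\right)
$$

$$
t_C(a) - t_C(b) = \frac{1}{k}\left(\frac{1}{a} - \frac{1}{b}\right) > 0 \qquad (0 < a < b < C)
$$

In this simplified model the margin does not depend on C at all: the higher starting value reaches every ceiling first, by the same amount of time. That is a property of the assumed equation, not a measurement of any real field.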

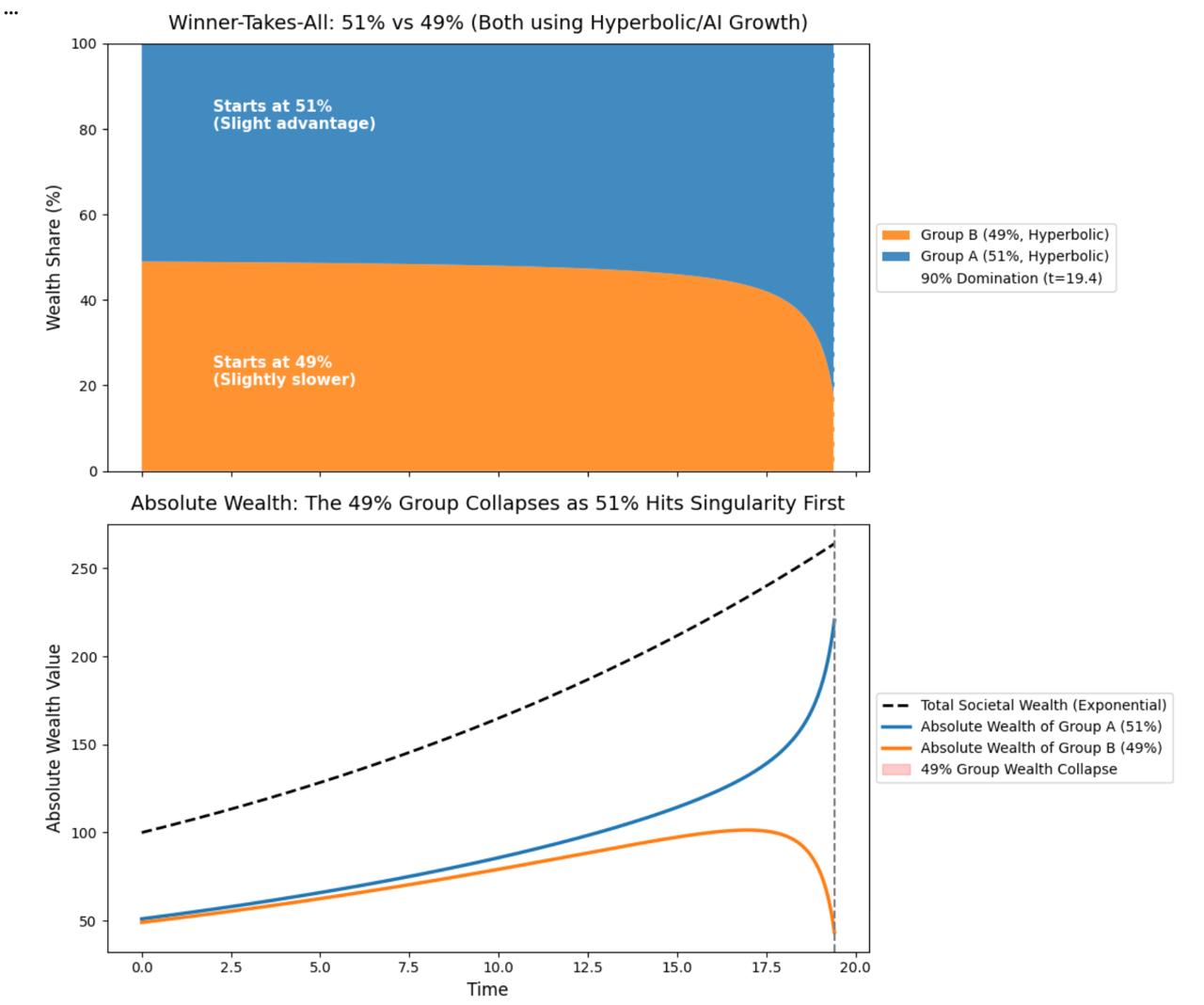

Second figure: the market can keep growing while competition turns zero-sum

I then asked Gemini to draw another figure to illustrate an even harsher possibility: the market can keep growing while competition becomes more zero-sum at the individual level.

The setup is simple:

- the total market still expands exponentially;

- there are two participants with initial shares of 51% and 49%;

- both benefit from the same hyperbolic growth pattern.

Intuitively, that seems like a tiny difference—almost negligible.

But the chart makes the cruelty visible: after a threshold point, the participant that started with only a slight edge effectively swallows almost the whole market.

That is what genuinely chills me, because it turns “winner-takes-most” from a business cliché into a lived survival pressure.

So the AI era may not simply mean “everyone gets more productive, and therefore everyone does better.”

It may also mean:

- the total pie grows;

- returns concentrate harder toward a tiny minority;

- small early leads get amplified into structural dominance;

- and the rest, even if they are talented and even if they use AI too, gradually lose their place.

If that is true, then downclassing is not just internet slang. It is the outline of a new stratification.

Figure 2: If both sides are using the same AI amplifier, the decisive variable may not be whether someone adopts AI at all. It may be the tiniest difference in starting position. The result is not mutual flourishing, but a more total kind of capture.
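The 51% versus 49% setup can be sketched in the same spirit. The post does not give the chart's formulas, so everything here is an assumption: the market grows exponentially, each participant's "strength" follows the same hyperbolic law dx/dt = K·x^2, and market share is simply proportional to strength. The constants and names (`split`, `strength`, `M0`, `R`, `K`) are hypothetical.

```python
import math

# Hypothetical setup; the original chart's formulas are not shown in the post.
# - total market M(t) = M0 * exp(R * t) keeps growing exponentially;
# - each participant's competitive "strength" follows the same hyperbolic law
#   dx/dt = K * x**2, i.e. x(t) = x0 / (1 - K * x0 * t);
# - each participant's share of the market is proportional to its strength.
M0, R, K = 100.0, 0.5, 1.0  # illustrative constants


def strength(t: float, x0: float) -> float:
    """Hyperbolic strength at time t; inf once the curve has blown up."""
    denom = 1.0 - K * x0 * t
    return x0 / denom if denom > 0 else math.inf


def split(t: float, leader0: float = 0.51, trailer0: float = 0.49):
    """Return (leader_share, trailer_share, market_size) at time t."""
    market = M0 * math.exp(R * t)
    a, b = strength(t, leader0), strength(t, trailer0)
    if math.isinf(a):  # the leader diverges first and takes the whole market
        return 1.0, 0.0, market
    return a / (a + b), b / (a + b), market


if __name__ == "__main__":
    for t in (0.0, 1.0, 1.9, 1.95, 1.96):
        lead, trail, market = split(t)
        print(f"t={t:4.2f}  market={market:6.1f}  "
              f"leader={lead:6.1%} ({lead * market:6.1f})  "
              f"trailer={trail:6.1%} ({trail * market:5.1f})")
```

With these made-up constants the market grows from 100 to roughly 266 by t = 1.96, yet the trailer's absolute slice shrinks from 49 to under 3. The reason is that the leader's strength diverges at t = 1/0.51, about 1.96, slightly before the trailer's at 1/0.49, about 2.04. Whether any real market behaves this way depends on assumptions this sketch does not test.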

The truly absurd part: I immediately dictated this feeling to AI

The second thing this all made me think of feels even stranger than the graphs.

I did not sit down at a desk, outline an argument, gather sources, and slowly work toward an essay.

Instead, I was scrolling YouTube on my phone, got hit by that video, and almost instantly felt the urge to preserve the feeling before it dissolved.

And what I did next was not to open a notebook and struggle my way through language by myself. I started speaking. The speech was transcribed. Then I handed it to an AI agent to shape into something publishable.

That is where the absurdity lives.

I am describing the fear AI produces, while relying on AI as the medium, the process, the speed, and the amplifier of that very description.

The emotion does not emerge first and then get reflected on from outside the system.

The moment it appears, the system is already there—capturing it, structuring it, polishing it, publishing it.

This is not irony. It is worse than irony.

It is the realization that we no longer stand outside the system in the first place.

Maybe the deepest fear is not even “will AI replace me?”

Maybe it is this:

AI is beginning to replace the distance between me and my own feelings.

There used to be friction between feeling something and turning it into language. Time. Resistance. Clumsiness. Reflection.

Now the feeling arrives and can almost instantly become text, structure, output, content.

The efficiency is astonishing. But that is exactly why it feels uncanny. Even the act of noticing what I feel is being accelerated by a system.

And that brings me back again to NetworkChuck’s line: “I hate AI, but I love AI.”

What makes that sentence feel so true is that it is not rhetorical. It is experiential. You cannot help but be drawn to the power of it, and you cannot help but feel threatened by it. You know it expands your capability, but you also begin to suspect that what it expands most reliably may be the speed of elimination itself.

Then, right as I was about to send this off, the day’s news digest arrived

At almost the exact moment I was preparing to hand this material to the agent, another OpenClaw agent, one that curates news, sent its daily digest.

A few items felt painfully sharp in this context:

- growing concern over AI-related psychological harm, including reported links to suicide cases and mass-casualty incidents;

- developers discussing whether there is a market for AI-generated articles on WeChat Official Accounts;

- communities continuing to obsess over models, wrappers, cost, quality, and replacement.

None of those items are new in isolation.

But against the theme of “a technology enthusiast becoming afraid of technology itself,” they become part of the same atmosphere.

AI is no longer just a tool story, no longer just a coding-assistant story, no longer just a productivity story. It is moving into psychological boundaries, professional boundaries, expressive boundaries, even the boundary of meaning itself.

That is what disturbs me most.

We used to welcome technology into life because we assumed it expanded human possibility.

Now, more and more often, the first feeling technology evokes is no longer “I can do more,” but “how long until I am no longer needed?”

What remains is not a conclusion, but a growing sense of ending

To be honest, I do not really want to pretend anymore that I am still standing in some cool, rational, analytical place from which this can be discussed as a trend.

What I feel more strongly right now is not a conclusion. It is an atmosphere.

After publishing the Chinese version of this piece, I went back and checked some of the technical YouTubers I used to follow for years. I wanted to see how many of them were still updating.

The answer was simple: most of them are gone, or effectively gone.

Some still upload, but I no longer feel I need to follow them. Not because they got worse, exactly, but because the whole content landscape feels like it is collapsing inward. People who once felt different now feel increasingly interchangeable. Channels that once fed different kinds of curiosity now seem to converge on the same themes: what I built with AI, what I automated with AI, how I compressed another domain of human effort with AI.

Even the range of technical creators I still pay attention to has narrowed over the last few years.

I do not think this is only algorithmic, or just a matter of my taste changing. It feels like a broader contraction.

Technical content is still growing, but the technical world itself feels more and more uniform.

There are more tools, faster expression, greater output—and yet I increasingly feel a strange poverty in it all. Everything is accelerating. Everything is amplifying. And at the same time, everything seems to be narrowing.

So what I want to leave behind here is not really a reasoned conclusion.

It is simply this feeling:

a world of technology that I once found deeply familiar, trustworthy, and alive may be ending.

Maybe not literally disappearing. But the part of it that gave me wonder—detours, patience, handcrafted messiness, the awkward but real joy of exploration—feels like it is ending.

What may remain is a stronger system, higher efficiency, fewer people, more centralized and homogenized output, shorter reaction times, and a pressure that is harder and harder to escape.

I do not know whether this is excessively pessimistic. I do not know whether, years from now, this feeling will look naive.

But I do know this:

this is not ordinary anxiety.

It feels more like the first moment in my life when, as someone who was almost instinctively optimistic about technology, I began to suspect that the thing I loved might not be leading me toward a wider future at all.

It might be carrying me—and many people like me—toward the edge.